Ai Weiwei is Living in Our Future

Living under permanent surveillance and what that means for our freedom

This talk was delivered on December 30th at 31C3 in Hamburg.

Ai Weiwei has been living in our future. His movements are restricted and he is systematically watched by the government. He lives in a world without privacy. A world without privacy is a world without freedom.

He is one of the most important artists in the world. You probably know him through his contribution to the Bird’s Nest stadium in Beijing.

Or because of the way he deconstructed the Western trade relationship with China and its labor conditions in his masterful installation at the Tate Modern in London where he put millions of handmade porcelain sunflower seeds on display.

Ai Weiwei is very outspoken about the Chinese government and its freedom-limiting policies. On April 3rd 2011 the Chinese government had had enough and arrested him on trumped-up charges of tax evasion.

For more than 65 days he was locked in a small cell with two guards from the People’s Liberation Army watching him 24/7 from up close. They even stayed at his side when he showered or went to the toilet.

At the Venice Biennale he showcased six large metal boxes recreating scenes from his imprisonment. As a visitor you are forced into the role of a person watching the guards as they watch Ai Weiwei.

After 81 days in a cell he was released and could return to his home and studio in Beijing. His freedom was very limited: they took away his passport, put him under house arrest, forbade him to talk with journalists about his arrest, forced him to stop using social media and put up cameras all around his house.

Andreas Johnsen, a Danish filmmaker, has made a fantastic documentary about the first year of house arrest. This is the trailer of ‘Ai Weiwei: The Fake Case’:

A fascinating element of the documentary is that you can see Ai Weiwei constantly experimenting with coping strategies for when you are under permanent surveillance. At some point, for example, he decided to put up four cameras inside his house and livestream his life to the Internet. This made the authorities very nervous and within a few days the ‘WeiWeiCam’ was taken offline.

Close to his house there is a parking lot where Ai Weiwei regularly catches some fresh air and walks in circles to stay in shape. He knows he is being watched and is constantly on the lookout for the people watching him. In a very funny scene he sees two undercover policemen observing him from a terrace on the first floor of a restaurant. He rushes into the restaurant and climbs the stairs. He stands next to the table that seats the agents, who at this point try and hide their tele-lensed cameras and look very uncomfortable. Ai Weiwei turns to the camera and says: “If you had to keep a watch on me, wouldn’t this be the ideal spot?”

This scene shows how being responsible for watching somebody isn’t a pleasant job at all. The young boys that had to guard Ai Weiwei in his cell had to stand completely still, weren’t allowed to talk and couldn’t even blink their eyes. According to Ai Weiwei their bones made cracking noises when they were finally allowed to move again at the end of their shift. This obviously is an inhumane practice and I wasn’t surprised to learn that these boys, even though there were cameras everywhere, still found a way to communicate with Ai Weiwei and regain some of their humanity. During the few moments that there was some movement in the cell, like when walking to the shower, they asked him questions with their lips tightly closed. Just like ventriloquists.

The people watching are, in some ways, imprisoned too. Gavin John Douglas Smith, in an article titled “Empowered watchers or disempowered workers”, convincingly shows how powerless most CCTV operators feel. They are forced to look at situations without any way of influencing them. Whenever something important happens they are pushed aside by somebody higher in rank, and they have zero freedom because they have to strictly follow procedure. They are actually being used as a small piece of human cognitive processing inside a giant automated surveillance system. They have to do the pattern recognition that computers aren’t capable of yet. We’ll get back to this theme later on.

Of course Ai Weiwei’s current life isn’t completely the same as our future lives. He is permanently and actively watched, whereas we will be permanently and passively watched. He is a public persona with hundreds of thousands of followers, so the Chinese government has to be a bit careful with him. That won’t be the case for most of us. But for me it is a fact that, unless we do something, our world will look more and more like his world. This is Ai Weiwei on how that feels:

“The individual under this kind of life, with no rights, has absolutely no power in this land, how can they even ask you for creativity? Or imagination, or courage or passion?”

Most of us aren’t fully aware of how truly all-encompassing the current surveillance infrastructure is and how quickly we are making it larger still. We often don’t realize how much the technology can already do today and how we are letting it play a large part in our lives.

A few months ago we heard that DigitalGlobe, the company that supplies Google Maps with its satellite imagery, had launched a new satellite into orbit. It can record images with a resolution of 25 centimeters per pixel and maps 680,000 square kilometers per day. This is a commercial satellite. The US Army is probably already using satellites with a higher resolution and with more capacity. The fact is that you can now never know whether you are being watched from the sky.

The algorithms for facial recognition are getting better every day too. In another recent news story we heard how Neil Stammer, a juggler who had been on the run for 14 years, was finally caught. How did they catch him? An agent who was testing a piece of software for detecting passport fraud decided to try his luck by using the facial recognition module of the software on the FBI’s collection of ‘Wanted’ posters. Neil’s picture matched the passport photo of somebody with a different name. That’s how they found Neil, who had been living as an English teacher in Nepal for many years. Apparently the algorithm has no problems matching a 14-year-old picture with a picture taken today. Although it is great that they’ve managed to arrest somebody who is suspected of child abuse, it is worrying that there don’t seem to be any safeguards preventing a random American agent from using the database of pictures of suspects to test a piece of software.

Knowing this, it should come as no surprise that we have learned from the Snowden leaks that the National Security Agency (NSA) stores pictures at a massive scale and tries to find faces inside of them. Their ‘Wellspring’ program checks emails and other pieces of communication and shows them when it thinks there is a passport photo inside of them. One of the technologies the NSA uses for this feat is made by Pittsburgh Pattern Recognition (‘PittPatt’), now owned by Google. We underestimate how much a company like Google is already part of the military-industrial complex. I therefore can’t resist showing a new piece of Google technology: the military robot ‘WildCat’ made by Boston Dynamics, which was bought by Google in December 2013:

In the case of facial recognition it is clear: the government delivers the pictures of faces, and commercial companies deliver the smart algorithms that make sure a face is also recognized when the angle is a bit tricky. In a similar way the NSA can now use software to look at outdoor pictures and match them to satellite imagery to find the location where they were taken.

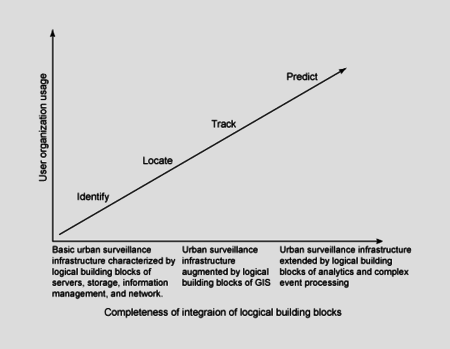

The ultimate goal of these government agencies is clearly shown in IBM’s ‘maturity model’ for ‘urban surveillance infrastructure’. It should not only be possible to identify you on the street, to locate you and to track you; in the end they want to predict what you are going to do.

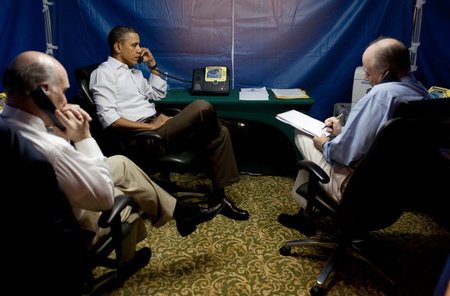

So what can you do to escape ubiquitous government surveillance? We know how Obama tries to do it. Whenever he is outside the US and needs to have a private conversation or read a secret document he will go to a special hotel room that has an opaque-walled tent that constantly emits noise. Only there can he be sure no one is watching or listening.

It is not only the government that is following us and trying to influence our behavior. In fact, it is the standard business model of the Internet. Our behavior on the Internet is nearly always mediated by a third party. Facebook and WhatsApp sit between you and your best friend, Spotify sits between you and Beyoncé, Netflix sits between you and Breaking Bad and Amazon sits between you and however many Shades of Grey. The biggest commercial intermediary is Google, which by now decides, among other things, how I walk from the station to the theatre, in which way I will treat the symptoms of my cold, whether an email I’ve sent to somebody else should be marked as spam, where best I can book a hotel, and whether or not I have an appointment next Thursday.

Companies that have to earn our money in the physical world, in ‘meatspace’, are making quick progress digitizing our interactions with them so that their tracking capabilities can catch up. The frontrunner is Disney, the theme park company that, after years of hardcore children-focused marketing, elicits the following response:

Disney nowadays has so-called ‘MagicBands’: personalised wristbands (your name is on it) with a built-in RFID chip. According to Disney this device is “the key to making your experience INCREDIBLE!”. The web is full of ‘unboxing’ videos in which people unpack the wristbands weeks before their planned holiday to Disney World.

You can use the wristband to enter your hotel room, to gain access to the theme parks, to check in at the ‘Fastpass’ entrances, to connect your ‘photo pass’ to your online account and of course, first and foremost, to pay everywhere with the least amount of friction possible. The bonus for Disney is that they now finally know where all their customers are and precisely how much they are spending. It is only a matter of time before the park adjusts itself to the person with the wristband, creating a personalized experience that seamlessly matches your financial means.

At a micro-level we are all Disney. The market for tracking devices for children and pets is exploding. An illustrative example is Tagg’s ‘Pet Tracker’.

Put a collar with a GPS chip around your dog’s neck and from that moment onwards you will be able to follow your dog on an online map and get a notification on your phone whenever your dog is outside a certain area. You want to take good care of your dog, so it shouldn’t be a surprise that the collar also functions as a fitness tracker. Now you can set your dog goals and check out graphs with trend lines. It is as Bruce Sterling says: “You are Fluffy’s Zuckerberg”.

What we are doing to our pets, we are also doing to our children.

The ‘Amber Alert’, for example, is incredibly similar to the Pet Tracker. Its users are very happy: “It’s comforting to look at the app and know everyone is where they are supposed to be!” and “The ability to pull out my phone and instantly monitor my son’s location, takes child safety to a whole new level.” In case you were wondering, it is ‘School Ready’ with a silent mode for educational settings.

Then there is ‘The Canary Project’ which focuses on American teens with a driver’s license. If your child is calling somebody, texting or tweeting behind the wheel, you will be instantly notified. You will also get a notification if your child is speeding or is outside the agreed-on territory.

If your child is ignoring your calls and doesn’t reply to your texts, you can use the ‘Ignore no more’ app. It will lock your child’s phone until they call you back. This clearly shows that most surveillance is about control. Control is the reason why we take pleasure in surveilling ourselves more and more.

I won’t go into the ‘Quantified Self’ movement and our tendency to put an endless number of sensors on our bodies in an attempt to get “self knowledge through numbers”, because we have already taken the next step towards control: algorithmic punishment if we don’t stick to our promises or reach our own goals.

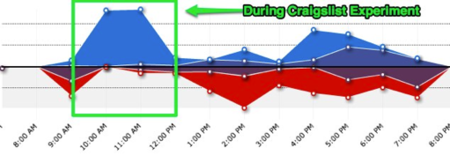

Meet Maneesh Sethi, a blogger who describes himself as follows: “I studied at Stanford, traveled the world for 4 years, learned 5 languages, founded an NGO in India, became a famous DJ in Berlin, and want to show you how to build a digital nomad lifestyle.” In late 2012 he used Craigslist to hire somebody to help him improve his productivity. The idea was that the person would come sit next to him and give him a slap whenever he was checking Facebook or Reddit instead of working. It looked like this:

Normally his self-measured productivity would average around 40%, but with Kara next to him, his productivity shot upward to 98%. So what do you do with that lesson? You create a wristband that shocks you whenever you fail to keep to your own plan. The wristband integrates well, of course, with other apps in your “productivity ecosystem”.

“Any developer can use Pavlok’s open API to increase compliance and improve communication with users. For designers of tracking apps, other wearable devices, digital course producers, or anyone who could use positive and negative feedback to drive stickiness for their service, Pavlok provides a seamless solution.”

Yes, you call your wristband ‘Pavlok’.

Writers of so-called ‘near future’ fiction are having a tough time. Their extrapolations of the now towards the future are quickly overtaken by current reality.

Take for example ‘Super Sad True Love Story’, my favorite Gary Shteyngart novel. Lenny, the protagonist, is considered a bit dirty because he still has paper books in his house. He lives in a world where the public sector has been integrated into the private sector (his girlfriend studies ‘Art and Finance’ at ‘HSBC-Goldsmiths’ in London) and where the US dollar has been pegged to the Yuan to battle inflation. Everybody carries an ‘äppärät’ with a ‘GlobalTeens’ account. Exhibitionism is rife with everybody constantly livestreaming their lives.

The book is full of unforgettable scenes in which Shteyngart takes aim at our current relationship with technology. Lenny is in the ‘Indefinite Life Extension’ business and after a year’s stay in Italy he has to return to the United States. Before he can travel he has to get a return visa at the ‘American Restoration Authority’. They tell him to turn off all the security on his äppärät, after which a Disney-type otter figure appears on the screen to interrogate him. After a couple of questions about his work and his credit rating the following happens:

“Lenny, did you meet any nice _foreign_ people during your stay abroad?”

“Yes,” Lenny said.

“What kind of people?”

“Some Italians.”

“You said ‘Somalians.’”

“Some Italians,” Lenny said.

“You said ‘Somalians,’” the otter insisted.

From there on it is only downhill for Lenny. His äppärät has ‘RateMePlus’ functionality giving him instant feedback about how the people in his direct environment see him. In a bar he gets a notification that his ‘fuckability rating’ is very low: out of the forty men in the bar, he is number forty.

Shteyngart wrote this book well before we had Grindr…

and Tinder…

…on our phones.

And even though he clearly foresaw something like ‘Bang with Friends’ (now renamed to ‘DOWN’), I am sure he still must have been a bit surprised when he learned of this service which asks you which of your Facebook friends you would like to sleep with. If the other party shares the sentiment then it is simple: “Get dates or get down!”

Dave Eggers describes the life of Mae in his dystopian novel ‘The Circle’. Mae works for ‘The Circle’, an Internet company that owes its success to inventing an identity service which can be used all over the net. Transparency is the core value at The Circle: everybody is better off with transparency. The company’s leaders are convinced everybody should be watched all the time, as that keeps us all honest. Every rational person knows, after a bit of thought, that you should have nothing to hide: the things you want to hide are usually good for you, but not for the rest of the world. ‘Sharing is caring’ and ‘privacy is theft’: if you keep something for yourself, nobody else can benefit.

At the start of the book, the company presents an innovation to its staff: a small camera, the size of a lollipop, which can be placed in an unobtrusive manner anywhere. It streams its HD recording straight to the net. In its current iteration the battery will last for two years only, but they are already working on a version that powers itself using solar energy. The cameras aren’t only useful to see from a distance whether the waves are high enough to go surfing, they also have an effect on human rights. They can be used to force ‘accountability’. The Circle has already put cameras in Cairo, so that protesters don’t have to film the missteps of the Egyptian army themselves. They have also put 50 cameras on Tiananmen Square in Beijing so that the next person to step in front of a tank can be shown live and from multiple angles. The camera is dubbed ‘SeeChange’. At some point in the book politicians start to hang SeeChange cameras around their necks, going ‘transparent’. There is immediate pressure on all the other politicians to do the same: what do they have to hide? The camera’s slogan is “All that happens must be known”.

In this case too, reality has kept pace with fiction. You can buy the ‘Narrative’ right now, a ‘lifelogging’ camera. This promotional video explains the concept:

On Kickstarter the makers of the ‘Blink’ camera tried to crowdfund 200,000 dollars for their invention. They received over one million dollars instead. The camera is completely wireless, has a battery that lasts a year and streams HD video straight to your phone.

The final example is the ‘Flone’, an easy-to-print frame allowing you to convert your smartphone into a camera drone. It should be clear by now that it is only a matter of time before the storage and power technologies have advanced far enough to continuously film everything and to store it forever.

Technology thinkers like Kevin Kelly and David Brin have been telling us since the mid-nineties that these technological developments cannot be stopped: there will be cameras everywhere pumping their data into the network. So we can choose: do we want to live in a world where only the government (police and secret services) has access to all this data (a panopticon), or should we opt for a world where everybody can keep everybody accountable because everybody has access to all cameras? Kelly calls this latter scenario ‘coveillance’, an attempt to make all tracking and surveillance as symmetric as possible.

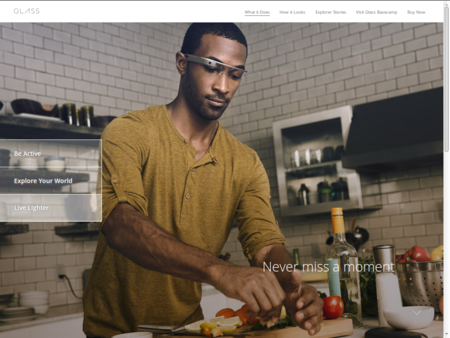

And that takes us to the Google Glass, the ultimate ‘looking-back-at-the-state-machine’.

With a Glass you can start filming at every moment while the world you are seeing is contextualized with useful information. Please watch this video that was put online a few months ago by Google Glass ‘explorer’ Sarah Slocum:

I would love to speak about the problems of gentrification in San Francisco, or about a culture where nobody thinks you are crazy when you utter the sentence “Don’t touch me, I’ll fucking sue you”, or about the fact that this Google Glass user apparently wasn’t ashamed enough about this interaction to not post this video online. But I am going to talk about two other things: the first-person perspective and the illusory symmetry of the Google Glass.

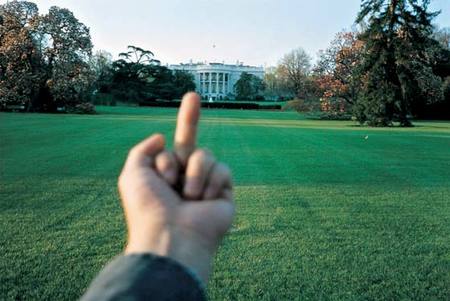

First the perspective from which this video was filmed. When I saw the video for the first time I was completely fascinated by her own hand which can be seen a few times and at some point flips the bird.

This reply to aggression has been explored earlier by Ai Weiwei. In his ‘Studies of Perspective’ he gives the finger to the American government…

The French government…

And the government of his home country.

The perspective reminded me personally of the first-person shooters that I used to play when I was younger. At the bottom of the screen you could always see what weapon you were carrying.

When Michael Brown, an unarmed black teenager, was shot by a police officer last August in broad daylight, there were many calls for the police to start wearing so-called ‘bodycams’ permanently. Sarah Slocum’s video and the screenshot from the Doom computer game suddenly made me realize that it is only a matter of time before we, as citizens, will see a video shot from the first-person perspective of a police officer. At the bottom of the screen you will see a gun instead of a middle finger. And you will see somebody getting shot. Whether the video will be used to prove that the police violence wasn’t proportional or to prove that the victim was the true aggressor is less relevant than the fact that this type of video material will exist and that we will be watching it.

Is this then the world that Brin and Kelly write about?

The American Civil Liberties Union (also known as the ACLU) released a report late last year listing the advantages and disadvantages of bodycams. The privacy concerns of the people who will be filmed voluntarily or involuntarily and of the police officers themselves (remember Ai Weiwei’s guards who were continually watched) are weighed against the impact bodycams might have in combatting arbitrary police violence.

The report tries to answer many complex questions. Should the police officer be able to decide when the camera is recording? ACLU’s answer: no. Instead it would be great if the camera turned itself on in situations where it thinks it might be necessary to do so. In what situations should the police officer request permission to film? Who has access to the videos? How long should they be stored? How do we make sure that the videos aren’t abused (in the case of celebrities for example)? And when do the videos have to be made public? We all need to start thinking a bit deeper about these types of technologies.

Back to the Google Glass video of Slocum. As I’ve written earlier, many people see the technology as the great equalizer, a piece of grassroots technology that levels the asymmetry between the owners of the CCTV cameras and the citizens. Nothing is further from the truth. All the video that is recorded by any Google Glass user is immediately uploaded to Google’s servers, where Google aggregates all the information, analyses it and uses it to increase its understanding of the world.

Wearing a Glass, you are a sensor in Google’s network, and a smart and cheap one at that (you actually pay Google to be allowed to be a sensor). As soon as the battery runs out you put the Glass on the charger, and with your two legs you can reach places that are difficult for Google’s ‘streetview’ cars. Of course you get something back for the effort, but in the end you are just a tiny cog in Google’s global system. You are ‘working’ for Google and doing exactly the things that computers aren’t yet good at.

Very recently I found another way in which Google appropriates my pattern recognition abilities to further its own cause. I had to fill in a ‘CAPTCHA’.

CAPTCHAs are used to battle spambots. By recognizing a pattern that isn’t easy to read for a computer, you are proving that you are a human. Millions of CAPTCHAs are filled in every hour. Luis von Ahn, a computer scientist, didn’t want to waste this cognitive capacity and invented the ‘reCAPTCHA’.

By typing in hard-to-read words you helped to digitize newspaper archives and books. You always had to type in two words: one that the computer already knew and another that the computer was a bit uncertain about. Google bought reCAPTCHA in 2009.
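The two-word scheme can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not reCAPTCHA’s actual implementation: the function names, the vote threshold and the case-insensitive comparison are all hypothetical choices made for this sketch.

```python
from collections import Counter, defaultdict

# Hypothetical threshold: how many matching human answers are required
# before a reading of an unknown word is accepted.
VOTES_NEEDED = 3

# Answers collected so far for each unknown word image, keyed by an id.
votes = defaultdict(Counter)


def submit(control_answer, control_truth, unknown_id, unknown_answer):
    """Check the known word; if it is right, count the guess for the unknown word.

    Returns True when the user passes the challenge as a human.
    """
    if control_answer.strip().lower() != control_truth.lower():
        return False  # failed the word the computer already knows
    votes[unknown_id][unknown_answer.strip().lower()] += 1
    return True


def transcription(unknown_id):
    """Return the agreed reading of an unknown word, or None if still undecided."""
    if not votes[unknown_id]:
        return None
    word, count = votes[unknown_id].most_common(1)[0]
    return word if count >= VOTES_NEEDED else None
```

The known word is what proves you are human; the unknown word is where your unpaid labour goes. Only answers from people who got the known word right are counted, and a reading is accepted once enough of them agree.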

A short while ago I noticed that you didn’t have to type in book texts anymore when filling in a reCAPTCHA. Nowadays you type in house numbers helping Google, without them asking you, to further digitize the physical world.

We can see this phenomenon in a growing number of work situations: the computer and its algorithms, ‘the system’, do as much as possible and we humans only have to jump in for the few things that artificial intelligence cannot handle.

I heard the most saddening example of turning humans into robots in an episode of the Radiolab podcast. An employee at a large shipping warehouse in the US talked about how she had to pick orders for large online retailers (think Amazon). The different products are randomly spread throughout the warehouse (for efficiency reasons that are hard to understand for us humans). Many different products are stored in one box. As soon as an order reaches the warehouse, the different products that need to be picked for the order are assigned to as many different people as possible (a bit like parallel processing). The logistical system calculates these assignments in such a way that all products in an order reach the end of the picking process at exactly the same time. This means that the people inside this picking system have to run around the warehouse with a barcode scanner which shouts instructions at them. As soon as they reach the box which should contain the product, they have 15 seconds to find the product in the box. The scanner starts counting out loud: fifteen, fourteen, thirteen… If you haven’t found the product at zero, the scanner will start to count the seconds that you are late: one, two, three, four… Occasionally there will be a mistake in the inventory and a product will be missing from the box. To prove to the system that the product really isn’t there, you then have to offer each and every product to the scanner (while it continues to count out loud). The system stores the percentage of products that you manage to find on time. Your lunchtime is exactly 29 minutes. You are fired on the spot if you take a 31-minute lunch as that messes with the planning capabilities of the system.

This is the implicit view on humanity that the big tech monopolies have: an extremely cheap source of labour which can be brought to a high level of productivity through the smart use of machines. To really understand how this works we need to take a short detour to the gambling machines in Las Vegas.

Natasha Dow Schüll has spent more than 10 years as a cultural anthropologist studying all aspects of the slot machine business. She has written a phenomenal book about her experiences: ‘Addiction by Design’. In it, she clearly shows how the slot machine industry has designed the complete process (the casinos, the machines themselves, the odds, etc.) to get people as quickly as possible into ‘the zone’. The player is seen as an ‘asset’ for which the ‘time on device’ has to be as long as possible, so that the ‘player productivity’ is as high as possible.

The book is full of baffling anecdotes of people who cannot disconnect from the machine. Like an old lady playing while wearing multiple pairs of pants so that nobody can notice that she doesn’t take the time to go to the toilets.

The casinos were the first industry to embrace the use of AEDs (automated external defibrillators). Before they started using them, the ambulance staff was usually too late whenever somebody had a heart attack: they are only allowed to use the back entrance (who will enter a casino when there is an ambulance in front of the door?) and casinos are purposefully designed so that you easily lose your way. Dow Schüll describes how she is with a salesperson for AEDs looking at a video of somebody getting a heart attack at a slot machine. The man falls off his stool onto the person sitting next to him. That person leans to the side a little so that the man can continue his way to the ground and plays on. While the security staff sound the alarm and start working the AED, literally nobody looks up from their gambling machine; everybody just continues playing.

This sort of reminds me of the feeling I often have when people around me are busy with Facebook on their phone. The feeling that it makes no difference what I do to get the person’s attention, that all of their attention is captured by Facebook. We shouldn’t be surprised by that. Facebook is very much like a virtual casino abusing the same cognitive weaknesses as the real casinos. The Facebook user is seen as an ‘asset’ of which the ‘time on service’ has to be made as long as possible, so that the ‘user productivity’ is as high as possible. Facebook is a machine that seduces you to keep clicking on the ‘like’ button.

Our relationship with Facebook, Google and Amazon isn’t symmetrical. We have no power to define the relationship and have zero say in how things work. If this is how commercial companies treat humanity, what can we expect from governments that are increasingly normative in what they expect from their citizens? Our governments have been taken hostage by the same logic of productivity that commercial companies use. With the inescapable number of cameras and other sensors in the public space they will soon have the means to enforce absolute compliance. I am therefore not a strong believer in the ‘sousveillance’ and ‘coveillance’ discourse. I think we need to solve this problem in another way.

How? An answer to that question would probably require another speech. But I’d like to point in what I think is the right direction. And for that, we need to start with Nassim Taleb, the ‘enfant terrible’ of the academic world.

Taleb has carefully cultivated the image of a bully for himself, somebody who likes to crush other people with his intellectual (and physical) powers. Recently he too discovered the power of the camera. In July he posted on Facebook about “the magic of the camera in reestablishing civil/ethical behaviour”. I cite:

“The other day, in the NY subway corridor in front of the list of exits, I hesitated for a few seconds trying to get my bearings… A well dressed man started heaping insults at me ‘for stopping’. Instead of hitting him as I would have done in 1921, I pulled my cell and took his picture while calmly calling him a ‘Mean idiot abusive to lost persons’. He freaked out and ran away from me, hiding his face in his hands.”

Taleb has written one of the most important books of this century. It is called ‘Antifragile: Things That Gain from Disorder’ and it explores how you should act in a world that is becoming increasingly volatile. According to him, we have allowed efficiency thinking to optimize our world to such an extent that we have lost the flexibility and slack that is necessary for dealing with failure. This is why we can no longer handle any form of risk.

Paradoxically this leads to more repression and a less safe environment. Taleb illustrates this with an analogy about a child which is raised by its parents in a completely sterile environment having a perfect life without any hard times. That child will likely grow up with many allergies and will not be able to navigate the real world.

We need failure to be able to learn, we need inefficiency to be able to recover from mistakes, we have to take risks to make progress and so it is imperative to find a way to celebrate imperfection.

We can only keep some form of true freedom if we manage to do that. If we don’t, we will become cogs in the machines. I want to finish with a quote from Ai Weiwei:

“Freedom is a pretty strange thing. Once you’ve experienced it, it remains in your heart, and no one can take it away. Then, as an individual, you can be more powerful than a whole country.”

This text was originally delivered as a speech in Dutch. Credits for the images are available here. The sources used in the text are here.